Introduction

Snowflake just recorded the largest software IPO in history, confirming the demand for high-performance data warehousing in the enterprise analytics space. Snowflake’s offering is extremely attractive upfront. It is easy to use, with a relatively fast and seamless setup process. On smaller-scale traditional OLAP workloads, users experience great performance with an appealing consumption-based pricing model. It seems like a grand slam, until it’s time to scale. As enterprises move their industrial-grade workloads to Snowflake, performance plummets, costs surge unpredictably, and users are forced to throw more money and compute at their workloads to maintain SLAs. Here we will highlight the key architectural differences between Snowflake and Kinetica, break down the most common challenges users face with Snowflake, and explain why a vectorized streaming data warehouse is the optimal architecture for advanced analytics at scale.

Workload Management

Workload management is one of the more commonly criticized flaws in Snowflake’s offering. Snowflake can be broken down into three layers: Storage, Compute, and Services. The storage layer is an RDBMS with a columnar format, used to store structured and semi-structured data. The compute layer consists of “virtual warehouses” that all share the same storage layer, and the services layer, among other responsibilities, manages all communication between virtual warehouses. If a user wants to isolate certain workloads to maximize performance, the only real option is to spin up another virtual warehouse in the compute layer. This is a very rigid approach that drives up costs, especially as data volumes increase.

Kinetica addresses this issue with its tiered storage capabilities. Kinetica can still be used in a single data model approach and is capable of referencing and creating tables from externally managed sources such as HDFS, S3, and Blob storage, but with tiered storage, administrators have the flexibility and control to manage their workloads across their resources. Kinetica moves data between cold storage, local persistence, and in-memory caching to ensure maximum performance during query processing. For example, tables that are only accessed every 6 months do not need to live in memory. That would be incredibly expensive and would consume capacity from other workloads that may need vectorized computational performance. Users can define a tiering strategy for their data, and Kinetica will automatically move the data between tiers. Tiered storage can also be used as a resource management tool, where administrators define how much of each tier individual workloads, and even individual users, can access. A great real-world example of tiered storage in action is one of our pharmaceutical partners, who is operating on over 1 trillion data points and still obtaining sub-second query response times.

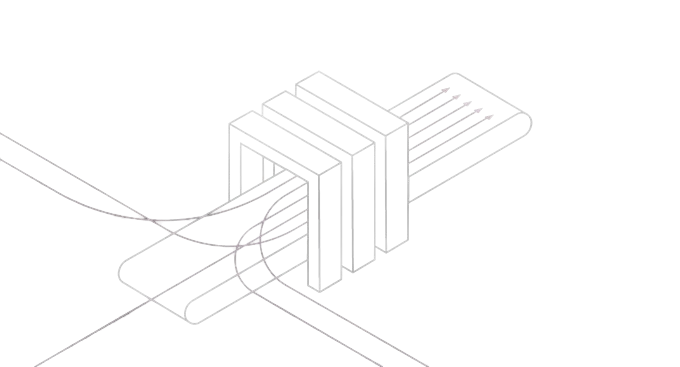

Kinetica’s Tiered Storage Differentiates from In-Memory Solutions Enabling Petabyte Scale Analytics at Feasible Costs
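The tiering idea described above can be pictured with a minimal Python sketch. The three tier names mirror the tiers named in the text; the `Table` class, the `choose_tier` function, and the day thresholds are hypothetical and chosen only for illustration. They are not Kinetica's actual policy or API.

```python
from dataclasses import dataclass

# Hypothetical thresholds (assumptions for illustration only).
HOT_WINDOW_DAYS = 7     # accessed within a week: keep in memory
WARM_WINDOW_DAYS = 90   # accessed within a quarter: keep on local disk


@dataclass
class Table:
    name: str
    days_since_last_access: int


def choose_tier(table: Table) -> str:
    """Pick a storage tier from how recently the table was accessed."""
    if table.days_since_last_access <= HOT_WINDOW_DAYS:
        return "memory"
    if table.days_since_last_access <= WARM_WINDOW_DAYS:
        return "local_persistence"
    return "cold_storage"


tables = [
    Table("live_trades", 0),
    Table("monthly_report", 30),
    Table("semiannual_audit", 180),  # the "every 6 months" case from the text
]
placement = {t.name: choose_tier(t) for t in tables}
```

Under these assumed thresholds, the rarely touched table ends up in cold storage, which leaves memory free for the workloads that need it. A real tiering strategy would likely weigh more than recency, for example table size and per-user resource limits.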

Caching vs. Memory-First Computation

Another major challenge users face with Snowflake is performance at scale on more advanced analytics. At its core, Snowflake is built on a caching architecture, which works really well on small data sets or repetitive traditional queries. This architecture starts to fall down as data volumes expand or workload complexity increases. In the emerging space of advanced analytics, where machine learning, artificial intelligence, graph theory, geospatial analytics, time-series analysis, ad hoc analysis, and real-time analytics are becoming predominant in every enterprise, data sets are typically larger and workloads are much more complex. Large table scans, complex joins, and ad hoc analysis against an entire corpus of data are a poor fit for a cache-based architecture. This is Kinetica’s sweet spot. Kinetica still leverages caching, with a SQL results cache and shadow cube, but its highly distributed, vectorized architecture lets it tackle complex, large-scale analysis. With a parallel orchestration engine and kernels written from the ground up, Kinetica vectorizes workloads across multiple cores and processors, depending on the optimal compute target for each workload. For full table scans, complex joins, and large aggregates, Kinetica leverages that vectorized computing for accelerated performance. However, unlike traditional in-memory solutions, Kinetica can handle petabyte-scale workloads by optimally moving data between memory, local persistence, and cold storage with the tiered storage capabilities mentioned above. This architecture is what uniquely positions Kinetica as the most performant and cost-effective data warehouse in the industry today for any type of large-scale, advanced analytical workload.

Kinetica’s highly distributed, columnar, vectorized architecture is extremely performant at scale on large data sets and complex analytical queries.
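The difference between repetitive and ad hoc queries under a result cache can be shown with a small Python sketch. It is a toy model built on the standard library's `functools.lru_cache`, not a representation of how Snowflake or Kinetica implement caching; the data, the `count_greater_than` query, and the counter are all made up for illustration.

```python
from functools import lru_cache

# Toy "table": one column of values. The data and query shape are made up.
ROWS = list(range(1_000))
scan_count = 0  # how many times the full column had to be scanned


@lru_cache(maxsize=128)
def count_greater_than(threshold: int) -> int:
    """Full scan of ROWS; the result is cached per distinct threshold."""
    global scan_count
    scan_count += 1
    return sum(1 for value in ROWS if value > threshold)


# Repetitive workload: the same query 100 times needs only one scan.
for _ in range(100):
    count_greater_than(500)
repetitive_scans = scan_count

# Ad hoc workload: 100 distinct queries, so every call misses the cache.
scan_count = 0
for threshold in range(100):
    count_greater_than(threshold)
ad_hoc_scans = scan_count
```

In this sketch the repeated query is answered from the cache after its first run, while each distinct ad hoc query pays for a full scan. That is the situation where raw scan speed, rather than cache hit rate, determines performance.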

Real-Time Analytics

Snowflake struggles with any type of event-driven analysis for several key reasons. First and foremost, Snowflake is optimized for read-only workloads on stateless data. Any type of real-time inferencing, data enrichment, or decision making against high-velocity streaming data will be completely impractical. Beyond its inability to perform write-intensive operations, there is another issue: the overall approach to streaming data into Snowflake. Snowpipe is advertised as Snowflake’s continuous ingest tool. “Snowpipe loads data within minutes after files are added to a stage and submitted for ingestion.” What this means is that data must first be loaded to a staging area such as S3, Blob Storage, or Google Cloud Storage. After the data hits the staging area, an event is triggered to submit an ingest request, compute resources are spun up to perform the ingest, and minutes after the request is made the ingest occurs, at a cost to the end user. This workflow creates heavy latency, reduces throughput, and incurs multiple hidden costs.
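The reason a staged workflow adds latency is simple arithmetic: the stages run one after another, so their delays add up. The sketch below makes that explicit. Every duration in it is a hypothetical placeholder, not a measurement of Snowpipe or any other product, and the stage names are paraphrased from the workflow described above; substitute measured values before drawing any conclusion.

```python
# All durations are hypothetical placeholders, in seconds.
staged_pipeline = {
    "buffer events into a file": 60.0,
    "upload file to the stage": 5.0,
    "notification and queueing": 30.0,
    "load into the table": 20.0,
}
direct_pipeline = {
    "consume event from the stream": 0.5,
    "insert into the table": 0.5,
}


def end_to_end_latency(stages: dict[str, float]) -> float:
    """Latency for one event is the sum of the sequential stage delays."""
    return sum(stages.values())


staged_seconds = end_to_end_latency(staged_pipeline)
direct_seconds = end_to_end_latency(direct_pipeline)
```

The point of the model is structural rather than numerical: each extra hop between the event source and the queryable table contributes its own delay, so removing hops is the most direct way to reduce end-to-end latency.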

Kinetica’s Unique Ability to Subscribe Directly to Real-Time Data Feeds Enables Developers to Analyze High-Velocity Data with Minimal Latency

Kinetica offers a truly differentiated approach to real-time analytics. With the ability to subscribe directly to incoming streams and a lockless, distributed architecture, developers can query fast-moving data as soon as it hits a Kinetica table. Data scientists can train and deploy their models natively, making real-time inferences through out-of-the-box, automated table monitors. Real-time geospatial analytics, time-series analysis, and graph theory can be executed against various sources of streaming data as they hit the underlying relational tables within Kinetica, and all of these operations are backed by Kinetica’s highly distributed, vectorized architecture.

Price Predictability

When we talk about price, the conversation usually diverges in two directions: how much do I need to pay in licensing to obtain this type of performance and functionality, and what is the total cost of ownership incurred by the compute and storage that power the application? With Kinetica, it’s incredibly straightforward and predictable. Let’s first examine the alternative: Snowflake’s version of a consumption-based, pay-as-you-go model. Not only are there huge hidden costs associated with additional compute as you scale, but there are also additional hidden costs as you tackle different fields of analysis. For example, if you want to perform any type of graph analysis on your data, you will have to evaluate and pick a separate technology, pay for the licensing, compute, and storage underlying that technology, and migrate data to and from each framework. The same principle applies to machine learning and geospatial workloads.

Kinetica takes a different approach to pricing, placing TCO in the hands of the customer. Users get the flexibility of a consumption model with the predictability of a subscription license. Kinetica offers world-class connectors for large batch or streaming analysis, a push-button AI/ML platform, patented graph solvers, and the most comprehensive and performant geospatial library available to date. With Kinetica, every analytical capability is included in the subscription with zero hidden costs. We encourage our partners to use more and do not penalize them during bursts of high use. With a very transparent pricing model and linear scalability, customers know exactly what they are buying into and have complete flexibility and control over their costs.
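The predictability argument can be made concrete with a small Python sketch that compares how a bill behaves under each model as usage varies. The rate, the fee, and the monthly hours are hypothetical numbers picked for illustration. They are not actual Snowflake or Kinetica prices, and the two functions are deliberately simplified models.

```python
def consumption_cost(hours_used: float, rate_per_hour: float) -> float:
    """Pay-as-you-go: cost scales directly with compute hours consumed."""
    return hours_used * rate_per_hour


def subscription_cost(monthly_fee: float) -> float:
    """Flat subscription: cost does not depend on usage within the month."""
    return monthly_fee


RATE_PER_HOUR = 16.0   # hypothetical consumption rate
MONTHLY_FEE = 8_000.0  # hypothetical subscription fee

monthly_hours = [200, 500, 1_000]  # quiet, typical, and bursting months
consumption = [consumption_cost(h, RATE_PER_HOUR) for h in monthly_hours]
subscription = [subscription_cost(MONTHLY_FEE) for _ in monthly_hours]
```

With these assumed numbers, the consumption bill ranges from 3,200 to 16,000 across the three months, while the subscription bill stays at 8,000. Which model is cheaper depends entirely on the real rates and usage; the sketch only shows why one is easier to forecast than the other.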

If you are facing performance issues or a high total cost of ownership, we welcome you to bring your problem, your data, and your technology of choice, and benchmark it against Kinetica to see the difference.

For more in-depth information, check out the upcoming tech talk with Kinetica’s CEO, Nima Negahban: http://go.kinetica.com/when-snowflakes-become-a-snowstorm